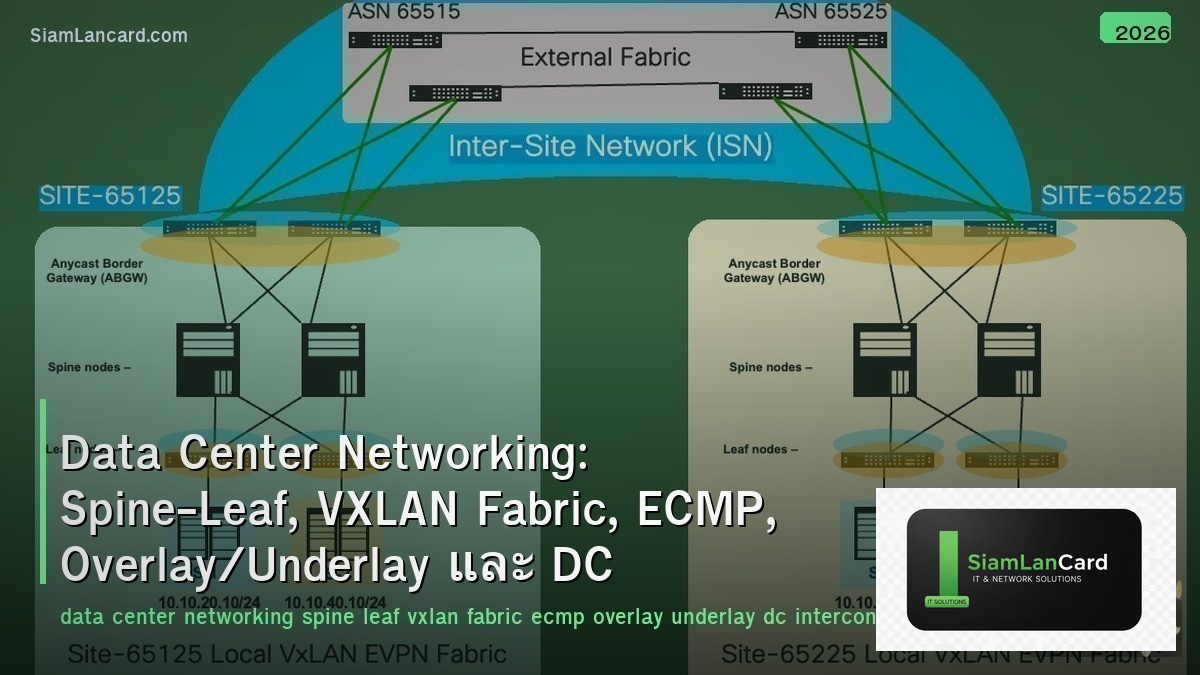

Data Center Networking: Spine-Leaf, VXLAN Fabric, ECMP, Overlay/Underlay and DC Interconnect

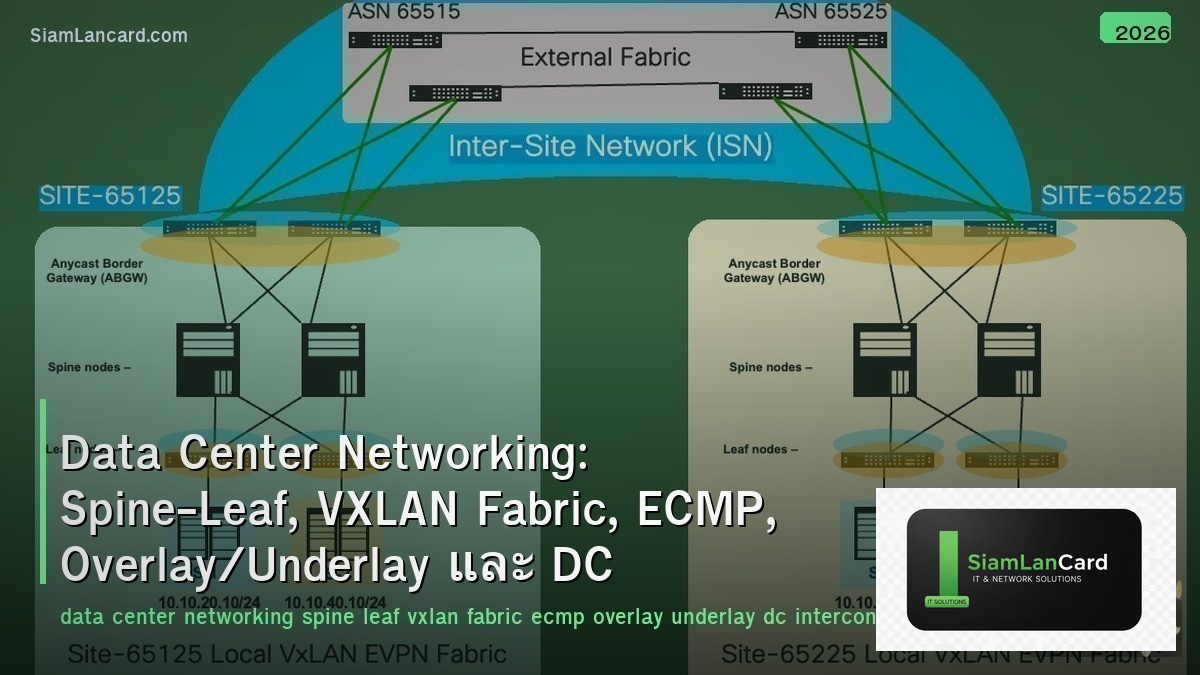

Data Center Networking is designed for high bandwidth, low latency and scalability. A Spine-Leaf topology gives the same hop count, and therefore consistent latency, on every path; a VXLAN Fabric builds an overlay network for multi-tenancy; ECMP spreads traffic across multiple paths; the Overlay/Underlay split separates the logical network from the physical one; and DC Interconnect links data centers together.

The traditional 3-tier architecture (Core-Distribution-Access) is a poor fit for modern data centers: East-West traffic (server-to-server) now outweighs North-South traffic (client-to-server) by roughly 80:20; STP blocks redundant links, wasting bandwidth; latency is inconsistent because it depends on the path taken; and the design does not scale out easily. Spine-Leaf + VXLAN addresses each of these problems: every path is active (ECMP), latency is a consistent 2 hops, and the overlay decouples the logical network from the physical one.

Spine-Leaf Topology

| Feature | Details |
|---|---|
| Structure | Every leaf connects to every spine: a 2-tier folded Clos topology |
| Leaf (ToR) | Top-of-Rack switches that connect servers, storage and firewalls |
| Spine | Aggregation layer that interconnects all leaves; no end devices attach directly |
| Latency | Consistent: any server reaches a server on any other leaf in exactly 2 hops (leaf → spine → leaf) |
| No STP | All links active: L3 routing (BGP/OSPF) + ECMP instead of STP |
| Scale | Add spines for more bandwidth, add leaves for more ports: horizontal scaling |
| Oversubscription | Per leaf: server-facing (downlink) bandwidth ÷ spine-facing (uplink) bandwidth; typical designs target 3:1 down to 1:1 (non-blocking) |
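To make the oversubscription and scale rows concrete, here is a small Python sketch. It assumes a hypothetical leaf with 48 × 25G server ports and 6 × 100G uplinks, a hypothetical 32-port spine, and exactly one link from every leaf to every spine; the function names are illustrative only.

```python
from fractions import Fraction


def oversubscription(downlink_ports: int, downlink_gbps: int,
                     uplink_ports: int, uplink_gbps: int) -> Fraction:
    """Per-leaf ratio of server-facing bandwidth to spine-facing bandwidth."""
    downlink_bw = downlink_ports * downlink_gbps
    uplink_bw = uplink_ports * uplink_gbps
    return Fraction(downlink_bw, uplink_bw)


def as_ratio(r: Fraction) -> str:
    # Render Fraction(2, 1) as "2:1", Fraction(3, 2) as "3:2"
    return f"{r.numerator}:{r.denominator}"


def fabric_size(spine_ports: int, leaf_uplinks: int, leaf_downlinks: int) -> dict:
    """Size of a 2-tier Clos, assuming exactly one link from every leaf to every spine."""
    return {
        "spines": leaf_uplinks,                          # one spine per leaf uplink
        "max_leaves": spine_ports,                       # each leaf uses one port on every spine
        "max_server_ports": spine_ports * leaf_downlinks,
    }


# Hypothetical leaf: 48 x 25G server ports, 6 x 100G uplinks; hypothetical 32-port spine
ratio = oversubscription(48, 25, 6, 100)
print(as_ratio(ratio))          # 2:1
print(fabric_size(32, 6, 48))   # {'spines': 6, 'max_leaves': 32, 'max_server_ports': 1536}
```

Doubling the uplinks to 12 × 100G in this example would bring the ratio to 1:1, at the cost of needing 12 spines.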

VXLAN (Virtual Extensible LAN)

| Feature | Details |
|---|---|
| What it is | Overlay protocol: encapsulates L2 frames in UDP/IP → extends L2 over an L3 underlay |
| VNI | VXLAN Network Identifier (24-bit) → about 16 million segments (vs. 4096 IDs for 12-bit VLANs) |
| VTEP | VXLAN Tunnel Endpoint: the leaf switch that encapsulates/decapsulates VXLAN |
| Encapsulation | Original frame + VXLAN header (8B) + UDP (8B) + outer IPv4 (20B) + outer Ethernet (14B) = 50B overhead (IPv4 outer header, no 802.1Q tag) |
| MTU | Underlay IP MTU must be at least tenant MTU + 50B (e.g. 1550 for 1500-byte tenant packets); jumbo frames (9000+) on the underlay are common practice |
| UDP Port | Destination port 4789 (IANA assigned) |
| Control Plane | Multicast (flood-and-learn), ingress replication, or BGP EVPN (preferred) |
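The header layout and the MTU arithmetic can be checked with a short sketch. It follows the 8-byte header format in RFC 7348 (an I flag plus a 24-bit VNI) and assumes an IPv4 outer header with no 802.1Q tags; VNI 10010 and the helper names are hypothetical.

```python
import struct

VXLAN_UDP_PORT = 4789   # IANA-assigned destination port
INNER_ETHERNET = 14     # inner (tenant) Ethernet header, no 802.1Q tag
# Encapsulation overhead in bytes, assuming an IPv4 outer header and no outer 802.1Q tag
OVERHEAD = {"vxlan": 8, "udp": 8, "outer_ipv4": 20, "outer_ethernet": 14}


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header described in RFC 7348."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    flags = 0x08  # I flag: the VNI field is valid
    # Word 1: 8 flag bits + 24 reserved bits; word 2: 24-bit VNI + 8 reserved bits
    return struct.pack("!II", flags << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    _, word2 = struct.unpack("!II", header[:8])
    return word2 >> 8


def min_underlay_ip_mtu(tenant_ip_mtu: int) -> int:
    """Smallest underlay IP MTU that carries full-size tenant packets."""
    # Outer IP packet = outer IPv4 + UDP + VXLAN + inner Ethernet header + tenant IP packet
    return (OVERHEAD["outer_ipv4"] + OVERHEAD["udp"] + OVERHEAD["vxlan"]
            + INNER_ETHERNET + tenant_ip_mtu)


hdr = vxlan_header(10010)
print(hdr.hex())                   # 0800000000271a00
print(sum(OVERHEAD.values()))      # 50
print(min_underlay_ip_mtu(1500))   # 1550
print(2**24)                       # 16777216 possible VNIs
```

The same 50-byte figure appears on the IP side because the inner Ethernet header (14B) now rides inside the outer IP packet in place of the outer one.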

BGP EVPN (Control Plane for VXLAN)

| Feature | Details |
|---|---|
| What it is | BGP address family that distributes MAC/IP reachability for the VXLAN fabric |
| Route Types | Type 2 (MAC/IP Advertisement), Type 3 (Inclusive Multicast: VTEP discovery and BUM replication lists), Type 5 (IP Prefix): the most important types |
| Advantage | Control-plane learning: MAC/IP learned via BGP instead of flood-and-learn → known unicast is forwarded directly; only BUM traffic is still replicated |
| ARP Suppression | VTEP answers ARP locally (from BGP-learned MAC/IP bindings) → less ARP flooding |
| Multi-Tenancy | VRF per tenant → L3 VNI for inter-VXLAN routing → isolation between tenants |
| Symmetric IRB | Integrated Routing and Bridging: route at both the ingress and egress VTEP via the L3 VNI → each VTEP needs only the VNIs of its locally attached subnets |
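As a rough model of what a VTEP does with BGP-learned state, the sketch below keeps two tables filled from Type 2 routes: one for directed unicast forwarding and one for ARP suppression. The class names, field set and addresses are hypothetical simplifications; a real EVPN route carries more attributes (route distinguisher, route targets, labels).

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Type2Route:
    """Simplified EVPN Type 2 (MAC/IP Advertisement) route: only the fields used here."""
    vni: int
    mac: str
    ip: Optional[str]   # a Type 2 route may carry a MAC only, or MAC + IP
    vtep: str           # next hop: loopback of the advertising VTEP


@dataclass
class VtepState:
    # (vni, mac) -> remote VTEP, used for directed unicast forwarding
    mac_table: Dict[Tuple[int, str], str] = field(default_factory=dict)
    # (vni, ip) -> mac, used to answer ARP requests locally
    arp_cache: Dict[Tuple[int, str], str] = field(default_factory=dict)

    def learn(self, route: Type2Route) -> None:
        self.mac_table[(route.vni, route.mac)] = route.vtep
        if route.ip is not None:
            self.arp_cache[(route.vni, route.ip)] = route.mac

    def handle_arp_request(self, vni: int, target_ip: str) -> str:
        mac = self.arp_cache.get((vni, target_ip))
        if mac is not None:
            return f"proxy reply: {target_ip} is-at {mac}"
        return "flood: replicate as BUM traffic"

    def forward(self, vni: int, dst_mac: str) -> str:
        vtep = self.mac_table.get((vni, dst_mac))
        return f"unicast to VTEP {vtep}" if vtep else "flood: unknown unicast"


leaf = VtepState()
leaf.learn(Type2Route(10010, "00:50:56:aa:bb:01", "10.1.1.20", "10.255.0.12"))
print(leaf.handle_arp_request(10010, "10.1.1.20"))  # proxy reply: 10.1.1.20 is-at 00:50:56:aa:bb:01
print(leaf.handle_arp_request(10020, "10.1.1.20"))  # flood: replicate as BUM traffic
print(leaf.forward(10010, "00:50:56:aa:bb:01"))     # unicast to VTEP 10.255.0.12
```

Keying both tables on the VNI is what keeps tenants apart here: the same IP in another VNI is treated as unknown.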

ECMP (Equal-Cost Multi-Path)

| Feature | Details |
|---|---|
| What it is | Load-balances traffic across multiple equal-cost paths → uses all spine links |
| Hash | 5-tuple hash (src IP, dst IP, src port, dst port, protocol) → per-flow load balancing (all packets of a flow stay on one path, so they are not reordered) |
| Paths | Spine-leaf: each leaf has N uplinks (one per spine) → N-way ECMP |
| Polarization | Problem: the same hash at successive layers (e.g. leaf and spine) → flows that shared a path at one layer share a path again at the next → uneven distribution |
| Fix Polarization | Different hash seeds at each layer, unique router-id, entropy in the VXLAN UDP source port |
| BGP Multipath | maximum-paths [N]: allows installing multiple BGP next hops in the routing table |
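The polarization problem and the VXLAN source-port fix can be illustrated with a sketch. SHA-256 stands in for a switch's hardware hash, the integer `seed` is a hypothetical stand-in for a per-device hash seed, and the flows are synthetic. A second-layer device here only receives the flows that layer 1 hashed onto path 0, so reusing the same hash sends all of them down one path. The source-port helper follows the RFC 7348 recommendation to hash inner headers into the 49152-65535 range.

```python
import hashlib
from collections import Counter
from typing import List, Tuple

FiveTuple = Tuple[str, str, int, int, int]  # (src IP, dst IP, src port, dst port, protocol)


def flow_hash(flow: FiveTuple, seed: int = 0) -> int:
    """Deterministic per-flow hash; real ASICs use their own hardware hash functions."""
    data = f"{seed}|" + "|".join(map(str, flow))
    return int.from_bytes(hashlib.sha256(data.encode()).digest()[:4], "big")


def pick_path(flow: FiveTuple, n_paths: int, seed: int = 0) -> int:
    # Every packet of the same flow maps to the same path index
    return flow_hash(flow, seed) % n_paths


def next_layer_usage(flows: List[FiveTuple], seed_layer1: int, seed_layer2: int,
                     n_paths: int = 2) -> Counter:
    """Path usage at a second-layer device that only receives flows layer 1 put on path 0."""
    arriving = [f for f in flows if pick_path(f, n_paths, seed_layer1) == 0]
    return Counter(pick_path(f, n_paths, seed_layer2) for f in arriving)


def vxlan_source_port(inner: FiveTuple) -> int:
    # Outer UDP source port derived from the inner flow, within 49152-65535
    return 49152 + flow_hash(inner) % 16384


flows = [(f"10.0.{i // 250}.{i % 250}", "10.1.0.10", 20000 + i, 443, 6) for i in range(1000)]
print(next_layer_usage(flows, seed_layer1=0, seed_layer2=0))  # only path 0 appears: polarized
print(next_layer_usage(flows, seed_layer1=0, seed_layer2=7))  # both paths used
print(vxlan_source_port(flows[0]))  # stable per flow, varies across flows
```

Because the outer source port varies per inner flow, underlay switches that hash only the outer headers still see enough entropy to spread VXLAN tunnels across all spines.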

Overlay vs Underlay

| Feature | Underlay | Overlay |
|---|---|---|
| What | Physical network (IP routing between VTEPs) | Virtual network (VXLAN tunnels carrying tenant traffic) |
| Protocol | OSPF, BGP, IS-IS (L3 routing) | VXLAN + BGP EVPN (L2/L3 overlay) |
| Addressing | Loopback IPs for VTEPs, P2P links | Tenant IPs, MAC addresses, VNIs |
| Design | Simple: L3 everywhere, ECMP, no STP | Complex: VNI mapping, EVPN routes, IRB |
| Change | Rarely changes (stable infrastructure) | Changes frequently (tenant adds/moves) |
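The split between the two planes can be sketched as two separate lookups: the overlay maps a tenant MAC in a VNI to a remote VTEP, and the underlay maps that VTEP loopback to ECMP next hops. All addresses, VNIs and table names here are hypothetical. The last lines show why the overlay changes often while the underlay stays stable: moving a VM only rewrites an overlay entry.

```python
import ipaddress
from typing import Dict, List, Tuple

# Underlay RIB: VTEP loopback prefixes -> ECMP next hops (one per spine); values are illustrative
UNDERLAY_RIB: Dict[str, List[str]] = {
    "10.255.0.11/32": ["spine1", "spine2", "spine3", "spine4"],
    "10.255.0.12/32": ["spine1", "spine2", "spine3", "spine4"],
}

# Overlay: (VNI, tenant MAC) -> remote VTEP loopback, as learned from EVPN Type 2 routes
OVERLAY_MAC_TABLE: Dict[Tuple[int, str], str] = {
    (10010, "00:50:56:aa:bb:01"): "10.255.0.12",
}


def underlay_next_hops(vtep_ip: str) -> List[str]:
    """Longest-prefix match on the underlay RIB."""
    addr = ipaddress.ip_address(vtep_ip)
    matches = [(ipaddress.ip_network(p), hops) for p, hops in UNDERLAY_RIB.items()
               if addr in ipaddress.ip_network(p)]
    if not matches:
        raise LookupError(f"no underlay route to {vtep_ip}")
    return max(matches, key=lambda m: m[0].prefixlen)[1]


def resolve(vni: int, dst_mac: str) -> Tuple[str, List[str]]:
    # Overlay lookup picks the tunnel endpoint; underlay lookup picks the physical paths
    vtep = OVERLAY_MAC_TABLE[(vni, dst_mac)]
    return vtep, underlay_next_hops(vtep)


print(resolve(10010, "00:50:56:aa:bb:01"))
# ('10.255.0.12', ['spine1', 'spine2', 'spine3', 'spine4'])

# A VM move is an overlay-only change; the underlay RIB is untouched
OVERLAY_MAC_TABLE[(10010, "00:50:56:aa:bb:01")] = "10.255.0.11"
print(resolve(10010, "00:50:56:aa:bb:01")[0])  # 10.255.0.11
```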

DC Interconnect (DCI)

| Method | How | Use Case |
|---|---|---|
| VXLAN over WAN | Extend the VXLAN fabric across the DCI link → same VNI in both DCs | VM mobility, disaster recovery |
| EVPN Multi-Site | BGP EVPN between DC border leaves → selective route exchange | Large-scale multi-DC (Cisco, Arista) |
| OTV (Overlay Transport Virtualization) | Cisco: extends L2 over an L3 WAN while keeping each site's STP domain separate | Legacy DCI (being replaced by EVPN) |
| MPLS/VPLS | Service-provider L2VPN between DCs | Carrier-managed DCI |
| SD-WAN | Overlay WAN connecting DC sites | Branch-to-DC and DC-to-DC connectivity |
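Selective route exchange in EVPN Multi-Site can be pictured as an export filter on the border leaf. The sketch below is a hypothetical policy model, not any vendor's configuration: it passes Type 2/3 routes only for L2 VNIs stretched between sites and Type 5 routes only for exported L3 VNIs. The `EvpnRoute` type is a deliberate simplification.

```python
from dataclasses import dataclass
from typing import Iterable, List, Set


@dataclass(frozen=True)
class EvpnRoute:
    route_type: int  # 2 = MAC/IP, 3 = Inclusive Multicast, 5 = IP Prefix
    vni: int         # L2 VNI for types 2/3, L3 VNI for type 5 (simplified)
    key: str         # MAC address, VTEP IP, or IP prefix, depending on type


def export_to_remote_site(routes: Iterable[EvpnRoute],
                          stretched_l2_vnis: Set[int],
                          exported_l3_vnis: Set[int]) -> List[EvpnRoute]:
    """Hypothetical border-leaf export policy: only selected VNIs leave the site."""
    exported = []
    for r in routes:
        if r.route_type in (2, 3) and r.vni in stretched_l2_vnis:
            exported.append(r)   # L2 segment stretched to the other DC
        elif r.route_type == 5 and r.vni in exported_l3_vnis:
            exported.append(r)   # routed-only reachability for the tenant VRF
    return exported


local = [
    EvpnRoute(2, 10010, "00:50:56:aa:bb:01"),
    EvpnRoute(2, 10020, "00:50:56:aa:bb:02"),   # VNI 10020 stays local
    EvpnRoute(3, 10010, "10.255.0.11"),
    EvpnRoute(5, 50001, "10.1.2.0/24"),
]
for r in export_to_remote_site(local, stretched_l2_vnis={10010}, exported_l3_vnis={50001}):
    print(r)
```

Keeping non-stretched segments out of the remote site limits both the remote MAC tables and the scope of BUM flooding across the DCI link.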

Wrap-up: Modern DC = Spine-Leaf + VXLAN EVPN

Data Center Networking

| Topic | Key points |
|---|---|
| Spine-Leaf | 2-tier Clos, every leaf to every spine, consistent 2-hop latency, no STP, ECMP |
| VXLAN | L2 over L3 overlay, VNI (16M segments), VTEP (encap/decap), 50B overhead, underlay MTU ≥ tenant MTU + 50B (jumbo frames 9000+ common) |
| BGP EVPN | Control plane for VXLAN, MAC/IP learning via BGP, ARP suppression, symmetric IRB, multi-tenancy |
| ECMP | All paths active, 5-tuple hash, fix polarization with different seeds/entropy |
| Overlay/Underlay | Underlay = simple L3 (OSPF/BGP), overlay = complex tenant networks (VXLAN/EVPN) |
| DCI | EVPN Multi-Site (preferred), VXLAN over WAN, OTV (legacy), MPLS/VPLS (carrier) |
| Key | Spine-leaf + VXLAN EVPN = the standard modern DC architecture: all links active, scalable, multi-tenant |
