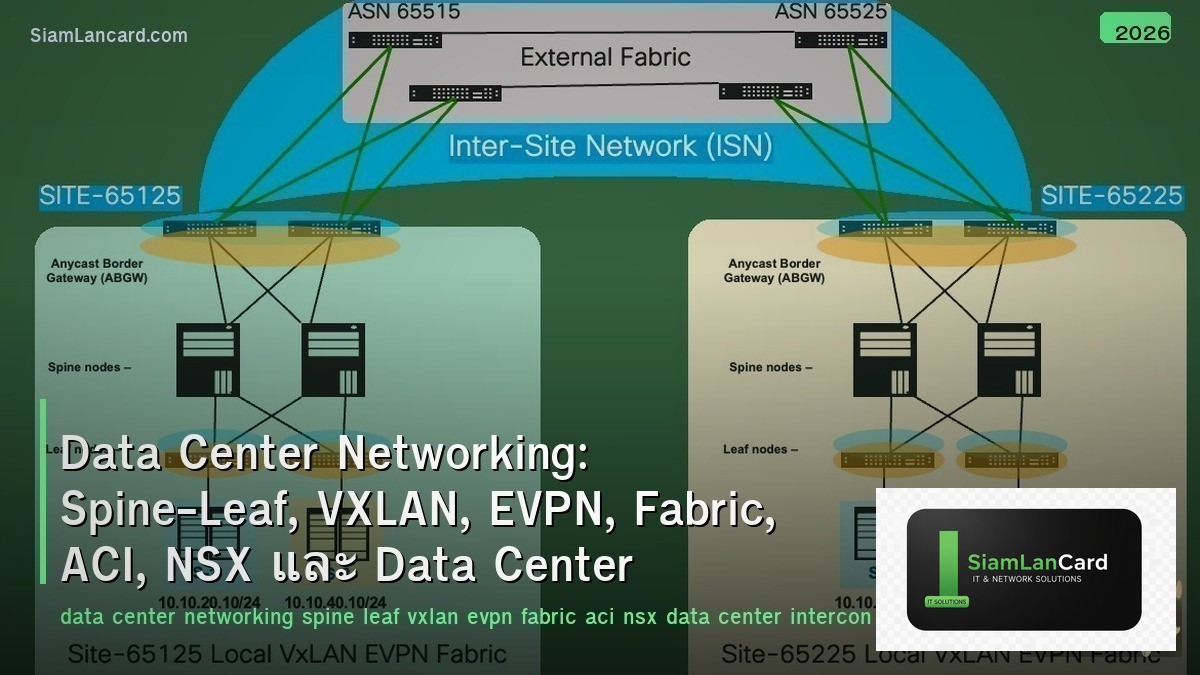

Data Center Networking: Spine-Leaf, VXLAN, EVPN, Fabric, ACI, NSX, and Data Center Interconnect

Data center networking is the design of networks for modern data centers that need scalability and agility. Spine-leaf is the standard topology, VXLAN extends Layer 2 across a Layer 3 fabric, EVPN is the control plane for VXLAN, a fabric makes the network operate as a single system, ACI is Cisco's SDN for the data center, NSX is VMware's network virtualization platform, and Data Center Interconnect (DCI) links multiple data centers.

The traditional three-tier architecture (core, distribution, access) is a poor fit for modern workloads. East-west (server-to-server) traffic now outweighs north-south (client-to-server) traffic and is often cited as about 80% of data center traffic. Three-tier was designed for north-south flows, so the aggregation layer becomes a bottleneck. Spine-leaf provides equal-cost paths between every pair of servers, which gives predictable latency, easy scaling, and no reliance on STP.

Spine-Leaf Architecture

| Component | Function | Design Rules |
| --- | --- | --- |
| Spine | Forwarding layer: connects all leaf switches | Every spine connects to every leaf (full mesh between the two tiers) |
| Leaf | Access layer: connects servers, storage, firewalls | Every leaf connects to every spine (full mesh between the two tiers) |
| Path | Servers on different leafs are always 2 fabric hops apart (leaf → spine → leaf) | Equal-cost multipath (ECMP) across all spines |
| Scaling | Add more leafs (more ports) or more spines (more bandwidth) | Non-disruptive: just add and connect |
| No STP | All links active: L3 routing (ECMP) instead of STP blocking | Use routed interfaces or VXLAN for L2 extension |
| Oversubscription | Leaf server-facing bandwidth ÷ leaf uplink bandwidth; 1:1 = non-blocking, 3:1 = typical | Design based on actual traffic patterns |
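The oversubscription and ECMP rows can be made concrete with a small calculation. The sketch below is illustrative only: the `Leaf` class, the port counts, and the CRC32-based `pick_spine` helper are assumptions made for this example, and real switches use their own hardware hash functions.

```python
import zlib
from dataclasses import dataclass


@dataclass(frozen=True)
class Leaf:
    """Hypothetical leaf model; speeds are in Gbit/s."""
    server_ports: int
    server_speed_gbps: int
    uplinks: int              # one uplink per spine in this sketch
    uplink_speed_gbps: int

    def oversubscription(self) -> float:
        """Server-facing bandwidth divided by spine-facing bandwidth."""
        downlink = self.server_ports * self.server_speed_gbps
        uplink = self.uplinks * self.uplink_speed_gbps
        return downlink / uplink


def pick_spine(flow: tuple, spine_count: int) -> int:
    """Pick a spine by hashing the flow 5-tuple (illustrative ECMP).

    Every packet of one flow hashes to the same spine, so packets stay
    in order while different flows spread across all uplinks.
    """
    key = "|".join(str(field) for field in flow).encode()
    return zlib.crc32(key) % spine_count


# Assumed example: 48 x 25G server ports, 4 x 100G uplinks.
leaf = Leaf(server_ports=48, server_speed_gbps=25, uplinks=4, uplink_speed_gbps=100)
ratio = leaf.oversubscription()  # 1200 / 400

# (src IP, dst IP, IP protocol, src port, dst port)
flow = ("10.0.1.10", "10.0.2.20", 6, 49152, 443)
spine = pick_spine(flow, spine_count=leaf.uplinks)
print(f"oversubscription {ratio:.0f}:1, flow pinned to spine index {spine}")
```

With these assumed numbers the leaf comes out at 3:1. Adding two more 100G uplinks (two more spines) would bring it to 2:1 without touching the server ports.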

VXLAN (Virtual Extensible LAN)

| Feature | Details |
| --- | --- |
| Problem | VLAN limit = 4,096 IDs (12-bit); need L2 connectivity across an L3 routed fabric |
| Solution | Encapsulate L2 frames in UDP/IP → tunnel across L3 network → 16M+ VNIs (24-bit) |
| VNI | VXLAN Network Identifier: like a VLAN ID but 24-bit (about 16 million segments) |
| VTEP | VXLAN Tunnel Endpoint: encapsulates/decapsulates on leaf switches |
| Encapsulation | Original L2 frame + VXLAN header + UDP (destination port 4789) + outer IP header |
| Overhead | 50 bytes of additional headers → fabric MTU must be at least 1550 (or use jumbo frames, 9000) |
| Use Case | Multi-tenant data center, VM mobility across racks, L2 extension across L3 fabric |
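To show where the 24-bit VNI and the 50-byte overhead come from, here is a minimal sketch that packs the 8-byte VXLAN header as laid out in RFC 7348 and adds up the outer headers. The function names are made up for this example, and the byte counts assume an IPv4 outer header with no outer VLAN tag.

```python
import struct

VXLAN_UDP_PORT = 4789
VXLAN_FLAG_VNI_VALID = 0x08  # the "I" flag: the VNI field is valid

# Headers placed in front of the original frame (assumption: IPv4, untagged).
OUTER_ETHERNET = 14
OUTER_IPV4 = 20
OUTER_UDP = 8
VXLAN_HEADER = 8


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header for a given VNI."""
    if not 0 <= vni < 2 ** 24:
        raise ValueError("VNI must fit in 24 bits")
    # Word 1: flags in the top byte, then 24 reserved bits.
    # Word 2: VNI in the top 24 bits, then 8 reserved bits.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from the first 8 bytes of a VXLAN header."""
    _, second_word = struct.unpack("!II", header[:8])
    return second_word >> 8


overhead = OUTER_ETHERNET + OUTER_IPV4 + OUTER_UDP + VXLAN_HEADER
fabric_mtu = 1500 + overhead  # the table's rule of thumb for 1500-byte hosts

header = vxlan_header(10100)
print(header.hex(), parse_vni(header), overhead, fabric_mtu, 2 ** 24)
```

An IPv6 outer header or an outer 802.1Q tag would raise the overhead, which is one reason many fabrics simply run jumbo frames on every fabric link.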

EVPN (Ethernet VPN)

| Feature | Details |
| --- | --- |
| What it is | BGP-based control plane for VXLAN: replaces flood-and-learn with BGP advertisements |
| MAC Learning | BGP distributes MAC/IP mappings → far less unknown-unicast flooding → more efficient |
| Route Types | Type 2 (MAC/IP), Type 3 (Inclusive Multicast), and Type 5 (IP Prefix) are the most important |
| ARP Suppression | VTEP answers ARP locally (from BGP-learned info) → less broadcast flooding |
| Multi-Homing | Server connected to 2 leafs (ESI-LAG) → EVPN handles active-active forwarding |
| Symmetric IRB | Inter-VXLAN routing: both the ingress and the egress VTEP route the packet → scalable L3 routing |
| Standard | RFC 7432 + RFC 8365: vendor-neutral (Cisco, Arista, Juniper, and Nokia all support it) |
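ARP suppression is easier to follow as a lookup. The sketch below models a VTEP that caches MAC/IP bindings learned from Type 2 routes and answers ARP requests from that cache. `MacIpRoute` and `Vtep` are hypothetical names for this example, not any vendor's API, and the model leaves out BGP itself, route targets, and aging.

```python
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class MacIpRoute:
    """Simplified stand-in for an EVPN Type 2 (MAC/IP) route."""
    vni: int
    mac: str
    ip: str
    next_hop_vtep: str


class Vtep:
    """Toy VTEP with a per-VNI IP-to-MAC cache fed by received routes."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.arp_cache: Dict[Tuple[int, str], MacIpRoute] = {}

    def receive_route(self, route: MacIpRoute) -> None:
        """Install a binding learned from a BGP advertisement."""
        self.arp_cache[(route.vni, route.ip)] = route

    def withdraw_route(self, route: MacIpRoute) -> None:
        """Remove a binding when the route is withdrawn."""
        self.arp_cache.pop((route.vni, route.ip), None)

    def handle_arp_request(self, vni: int, target_ip: str) -> Optional[str]:
        """Return the MAC for a local proxy reply, or None to flood."""
        route = self.arp_cache.get((vni, target_ip))
        return route.mac if route else None


leaf1 = Vtep("leaf1")
web = MacIpRoute(vni=10100, mac="00:50:56:aa:bb:01",
                 ip="10.1.1.20", next_hop_vtep="192.0.2.12")
leaf1.receive_route(web)

known = leaf1.handle_arp_request(10100, "10.1.1.20")      # answered locally
unknown = leaf1.handle_arp_request(10100, "10.1.1.99")    # not learned: flood
other_vni = leaf1.handle_arp_request(10200, "10.1.1.20")  # different segment
print(known, unknown, other_vni)
```

Keying the cache on `(vni, ip)` keeps tenants apart: the same IP address in another VNI is a different host and gets no proxy reply.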

Cisco ACI

| Component | Function |
| --- | --- |
| APIC | Application Policy Infrastructure Controller: centralized management (cluster of 3) |
| Spine/Leaf | Nexus 9000 switches in ACI mode (not standalone NX-OS) |
| Tenant | Logical isolation: each tenant has its own VRF, Bridge Domains, and EPGs |
| EPG | Endpoint Group: a group of endpoints with the same policy (like a security zone) |
| Contract | Policy between EPGs: defines what traffic is allowed (whitelist model) |
| Bridge Domain | L2 forwarding domain: like a VLAN but with more features (ARP optimization, L3 SVI) |
| Multi-Site | Connects multiple ACI fabrics across data centers with unified policy |
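The tenant, EPG, and contract rows describe a default-deny policy model, which can be sketched in a few lines. This is a conceptual model only, not the APIC object model or API. The class names, the IP-to-EPG mapping, and the rule that traffic inside one EPG is permitted are all assumptions made for this example.

```python
from dataclasses import dataclass
from typing import Dict, Set


@dataclass(frozen=True)
class Contract:
    """One permitted flow: consumer EPG may reach provider EPG on a port."""
    consumer_epg: str
    provider_epg: str
    protocol: str
    port: int


class Tenant:
    """Toy tenant holding endpoint-to-EPG membership and contracts."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.epg_of: Dict[str, str] = {}   # endpoint IP -> EPG name
        self.contracts: Set[Contract] = set()

    def add_endpoint(self, ip: str, epg: str) -> None:
        self.epg_of[ip] = epg

    def allowed(self, src_ip: str, dst_ip: str, protocol: str, port: int) -> bool:
        """Whitelist check: permit only what a contract explicitly allows."""
        src = self.epg_of.get(src_ip)
        dst = self.epg_of.get(dst_ip)
        if src is None or dst is None:
            return False  # unknown endpoints are never permitted
        if src == dst:
            return True   # assumption: traffic inside one EPG is open
        return Contract(src, dst, protocol, port) in self.contracts


prod = Tenant("prod")
prod.add_endpoint("10.0.1.10", "web")
prod.add_endpoint("10.0.2.10", "app")
prod.add_endpoint("10.0.3.10", "db")
prod.contracts.add(Contract("web", "app", "tcp", 8080))
prod.contracts.add(Contract("app", "db", "tcp", 3306))

print(prod.allowed("10.0.1.10", "10.0.2.10", "tcp", 8080))  # web -> app
print(prod.allowed("10.0.1.10", "10.0.3.10", "tcp", 3306))  # web -> db
```

The web tier can reach the app tier, but it cannot reach the database directly because no contract names that pair. That is the practical meaning of the whitelist model.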

VMware NSX

| Feature | Details |
| --- | --- |
| Type | Network virtualization overlay: runs on top of any physical network |
| NSX Manager | Central management: configures logical switches, routers, firewalls, and load balancers |
| Distributed Firewall | Per-VM firewall enforced at the hypervisor level (micro-segmentation) |
| Logical Switch | Virtual L2 segment that spans physical hosts; VXLAN-based in NSX-V (NSX-T uses Geneve encapsulation) |
| Logical Router | Distributed routing in the hypervisor: no hairpin through a physical router |
| NSX-T | Multi-hypervisor (ESXi, KVM), multi-cloud (AWS, Azure), container support (Kubernetes) |
| vs ACI | NSX = overlay (hypervisor); ACI = underlay + overlay (physical switches + APIC) |

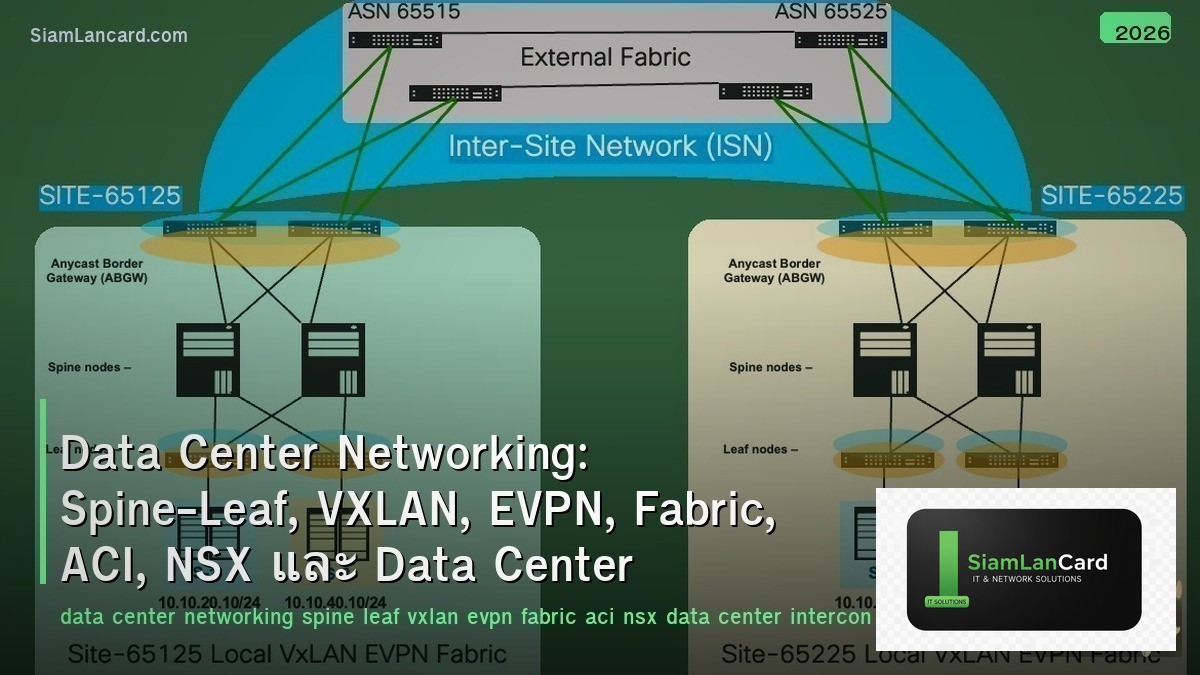

Data Center Interconnect (DCI)

| Method | How | Use Case |
| --- | --- | --- |
| VXLAN over WAN | Extend VXLAN tunnels between data centers → L2 stretch | VM mobility, active-active DC, disaster recovery |
| EVPN Multi-Site | BGP EVPN between DCs → controlled L2/L3 extension with anycast gateway | Multi-DC fabric with independent failure domains |
| OTV (Overlay Transport Virtualization) | Cisco proprietary L2 extension over L3 WAN | Legacy DCI, being replaced by EVPN |
| DWDM | Dense Wavelength Division Multiplexing: high-bandwidth optical transport between DCs | High-capacity DC-to-DC links (100G-400G per wavelength) |
| SD-WAN | Software-defined WAN for DC interconnect | Cost-effective DCI over internet + MPLS |

Closing Thoughts: Modern DC = Spine-Leaf + VXLAN/EVPN + Automation

Data center networking in summary:

Spine-Leaf: full mesh between tiers, 2-hop any-to-any, ECMP, no STP, easy scaling; it has replaced three-tier.

VXLAN: L2-over-L3 tunneling, 16M VNIs, UDP 4789, 50-byte overhead, needs a larger fabric MTU (jumbo frames).

EVPN: BGP control plane for VXLAN, MAC/IP distribution, ARP suppression, multi-homing, symmetric IRB.

ACI: Cisco SDN with an APIC controller, tenant/EPG/contract model, whitelist policy, multi-site.

NSX: VMware overlay with distributed firewall (micro-segmentation), logical switch/router, multi-cloud.

DCI: VXLAN/EVPN multi-site (standard), OTV (legacy), DWDM (high bandwidth), SD-WAN.

Key takeaway: spine-leaf + VXLAN/EVPN is the standard; ACI or NSX adds policy automation on top.
